Today we’ll pick up where we left off in Part 1. The focus for this part of the article is adding a second Lync Standard Edition Pool for disaster recovery purposes. When we finish the environment, we will have two Lync Standard Edition Pools in two different sites as shown below.

Although this pool’s main purpose is disaster recovery, we can still use it to provide Lync services for our users. If possible, I’d recommend splitting your users evenly between the two pools; that way only half of the users are impacted by an outage, and you know the “backup” pool is working at all times. This type of deployment is commonly referred to as “Active/Active”, meaning both servers are servicing users and managing some of the workload. I prefer “Active/Active” to “Active/Passive” (where one pool is just waiting for workload) because you are getting some use out of the hardware and you know it is working if you need to fail over to it.

When you plan for “Active/Active” scenarios, it is critical to scale appropriately. This means that each server/pool should be scaled to support all of the users at any given time. This allows you to provide appropriate resources in the event of a failover. If you’re deploying Enterprise pools, you also should scale to at least N+1.
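As a rough illustration of that sizing rule, here is a minimal sketch that checks whether one pool could carry the combined user count after a failover. The function name and the numbers are made up for this example, and the per-pool maximum is an assumption you should take from Microsoft's capacity guidance for your hardware and edition.

```powershell
# Hypothetical helper: can a single pool carry every user if its partner fails?
function Test-PoolFailoverCapacity {
    param(
        [Parameter(Mandatory = $true)][int]$UsersOnPoolA,
        [Parameter(Mandatory = $true)][int]$UsersOnPoolB,
        # Assumption: supply the supported maximum for ONE pool yourself
        [Parameter(Mandatory = $true)][int]$MaxUsersPerPool
    )

    $combined = $UsersOnPoolA + $UsersOnPoolB

    [pscustomobject]@{
        CombinedUsers     = $combined
        MaxUsersPerPool   = $MaxUsersPerPool
        CanAbsorbFailover = ($combined -le $MaxUsersPerPool)
    }
}

# Example: two pools with 2,500 users each, sized for 5,000 users per pool
Test-PoolFailoverCapacity -UsersOnPoolA 2500 -UsersOnPoolB 2500 -MaxUsersPerPool 5000
```

If CanAbsorbFailover comes back False, the surviving pool would be oversubscribed during an outage, so either move users elsewhere or add capacity.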

Now that we’ve covered all of that, we’ll start by installing pre-requisites on our new server. We’ll use the same command as before and then reboot the server:

Add-WindowsFeature MSMQ-Server,MSMQ-Directory,Web-Server,Web-Static-Content,Web-Default-Doc,Web-Scripting-Tools,Web-Windows-Auth,Web-Asp-Net,Web-Log-Libraries,Web-Http-Tracing,Web-Stat-Compression,Web-ISAPI-Ext,Web-ISAPI-Filter,Web-Http-Errors,Web-Http-Logging,Web-Net-Ext,Web-Client-Auth,Web-Filtering,Web-Mgmt-Console,Web-Dyn-Compression,Windows-Identity-Foundation,rsat-adds,telnet-client,net-wcf-http-activation45,net-wcf-msmq-activation45,Server-Media-Foundation

Once the server comes back up, we’ll need to log on and start the Lync install; we won’t jump into Step 1 though. Our first step will be to run the “Prepare First Standard Edition server” option. Although this isn’t our first SE server, we still need to run this to create the appropriate SQL instances for our new pool to host the CMS. If you forget this step, adding it later isn’t much fun, so please make sure to run this first if you plan to host CMS on a Standard Edition server.

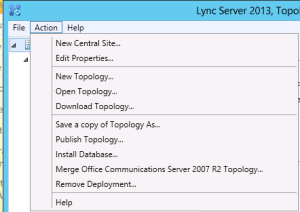

Next we’ll add our new site and new server in Topology Builder and pair it with the existing Front End. I won’t go through everything step by step, but for the highlights, we’ll begin by clicking “Lync Server” at the top of the topology and then going to “Action” > “New Central Site”.

When you finish creating the second site, the “New Front End Pool” wizard will open and you can run through the steps to create your new pool. Tip: Don’t forget to update your “External Base URL” to a public name that doesn’t match your pool name.

Once the pool is complete we can right-click on either pool in our topology and choose “Edit Properties”.

Under the “Resiliency” heading, we will check the box for “Associated backup pool” and select the server in the opposite location. We’ll also want to check the “Automatic failover and failback for Voice” option. This will populate the failure detection and fail back intervals with default values.

These values tell Lync how long the opposite pool should be down before users are allowed to register against their backup registrar (5 minutes/300 seconds), and how long their primary pool should be up before they can fail back. In most scenarios I shorten the failure detection interval so voice services are restored more quickly. The minimum value you can put here is 30 seconds (which is what I typically use). I normally leave the default for failback so if a server is in some type of crash loop, users don’t get moved back to it before the Lync services can be disabled.

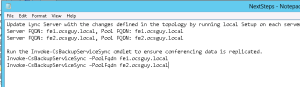

Once all the settings above are configured as desired, we can publish the topology and begin our server build on our new Standard Edition Front End Pool, FE2.ocsguy.local. If you happen to open your “To Do” list after publishing, you will see that you need to run local Setup (Step 2) on the first Front End, as well as all steps on the new Front End. Also, once you complete those steps you should run the Invoke-CsBackupServiceSync command against both pools to force an update.
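For reference, forcing that sync against both of the pools in this lab would look something like the sketch below. It assumes you are in the Lync Server Management Shell and that setup has already completed on both Front Ends.

```powershell
# Force the Lync Backup Service to sync each pool with its paired pool
Invoke-CsBackupServiceSync -PoolFqdn fe1.ocsguy.local
Invoke-CsBackupServiceSync -PoolFqdn fe2.ocsguy.local
```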

I won’t walk through the rest of the setup steps on FE2 since they are the same as the last article with the normal “Next, Next, Finish” dance. The one thing you may notice is the “OAuth” certificate will already be present on your new Front End in Step 3, which is expected. Just make sure to run Step 2 on your first Front End as new services and database settings will be pushed to it during that step.

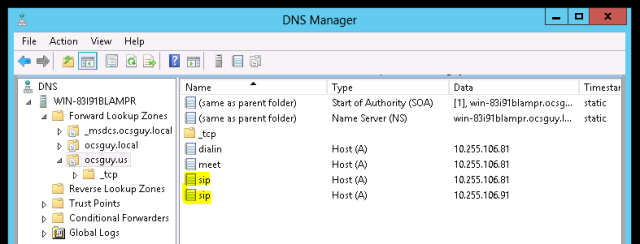

One other thing of note: we’ll want to create a second A record for SIP.ocsguy.us pointing to our new server. This will allow clients to use DNS load balancing in case of a pool outage.
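A quick way to confirm both records are being returned is to query the name from a client or server. This is only a sketch, and it assumes a machine that has the Resolve-DnsName cmdlet (Windows 8 / Server 2012 or later).

```powershell
# Expect one A record per Front End once the second record has been created
Resolve-DnsName -Name sip.ocsguy.us -Type A |
    Select-Object Name, IPAddress, TTL
```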

Now on to the real fun…

Lync Server 2013 added data replication for user information to the DR procedure. This means that when you checked the “Associated backup pool” box a few steps back, the server you selected as a backup was set up to receive a copy of the other server’s persistent user data.

Assuming all of the steps for the installer have been run on both servers, and the Invoke-CsBackupServiceSync commands have run, we can now test our failover. Prior to doing that though, I’m going to check my pools to verify they show in sync with the Get-CsBackupServiceStatus command:

I ran this command:

Get-CsBackupServiceStatus -PoolFqdn fe1.ocsguy.local | Select-Object -ExpandProperty BackupModules

As you can see from the screenshot above the data replication is working.

To test, I begin by turning off FE1.ocsguy.local. At this point all users who were hosted on FE1 will disconnect for 30 seconds and then see the “limited services due to a server outage” banner in the Lync client. Calls will still process to and from users, but the users’ contact lists will disappear and meetings hosted on FE1 will be down.

Now I RDP into FE2.ocsguy.local, open the Lync Management Shell, and invoke the Management Server failover:

Invoke-CsManagementServerFailover -BackupSqlServerFQDN fe2.ocsguy.local -BackupSQLInstanceName RTC -Force

This will prompt us to continue. I type “A”, and then press Enter:

That moves the CMS to the backup server. If we had an Edge server and the failed pool was the next hop for it, we would need to run the Set-CsEdgeServer command to change its next hop to the backup pool (more info here in step 1).
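As a rough sketch of those two follow-up checks: the first command reports where the active CMS master now lives, and the second repoints an Edge server at the surviving pool. The Edge FQDN edge1.ocsguy.local is hypothetical (this lab has no Edge server), so substitute your own.

```powershell
# Confirm the Central Management Store is now active on the backup server
Get-CsManagementStoreReplicationStatus -CentralManagementStoreStatus

# Hypothetical Edge server: change its next hop to the backup pool
Set-CsEdgeServer -Identity EdgeServer:edge1.ocsguy.local -Registrar Registrar:fe2.ocsguy.local
```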

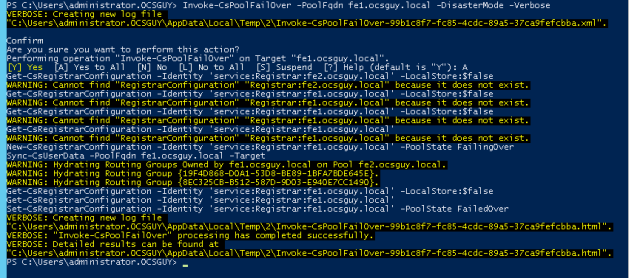

Next I run the “Invoke-CsPoolFailOver” command to start the disaster recovery.

This command will look like this:

Invoke-CsPoolFailover -PoolFQDN fe1.ocsguy.local -DisasterMode -Verbose

You will be prompted to continue. I typed in “A” and hit Enter.

As long as there aren’t any errors, you’re now failed over. Next we need to update the internal DNS records for our simple URLs (meet.ocsguy.us and dialin.ocsguy.us) so they point to FE2 and users can join meetings again.
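If the internal zone lives on Windows DNS, that record change could be scripted along these lines. This is a sketch under several assumptions: the ocsguy.us zone is hosted on the DNS server you run it on, the DnsServer module (Server 2012 or later) is available, and both IP addresses are placeholders rather than the real lab addresses.

```powershell
$zone  = 'ocsguy.us'
$oldIp = '192.0.2.11'   # placeholder: address of the failed pool (FE1)
$newIp = '192.0.2.12'   # placeholder: address of the backup pool (FE2)

foreach ($name in 'meet', 'dialin') {
    # Remove the A record that points at the failed pool
    Remove-DnsServerResourceRecord -ZoneName $zone -RRType 'A' -Name $name -RecordData $oldIp -Force

    # Add an A record for the backup pool with a short TTL
    Add-DnsServerResourceRecordA -ZoneName $zone -Name $name -IPv4Address $newIp -TimeToLive ([TimeSpan]::FromMinutes(5))
}
```

Clients keep using the old address until their cached record expires, so the TTL on the original records affects how quickly this takes effect.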

Last but not least I verify my Lync client can sign in from a Windows 8 machine without the red banner.

Once the problem is resolved and our original pool is back online, we can begin the failback process. I’ll start by moving the user services back with the command below:

Invoke-CsPoolFailBack -PoolFqdn fe1.ocsguy.local

You will be prompted to continue. I typed in “A” and hit Enter.

You will see lots of information in the Lync Management Shell. Verify there are no errors (red text) and if there are, review logs to determine the cause.
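Beyond scanning for red text, a couple of read-only commands can help confirm things are back to normal. This is a sketch only; the SIP address is a made-up test user, so swap in someone homed on FE1.

```powershell
# Hypothetical test user: shows the primary and backup pool details for this user
Get-CsUserPoolInfo -Identity 'sip:testuser@ocsguy.us'

# Confirm the backup service is replicating again after the failback
Get-CsBackupServiceStatus -PoolFqdn fe1.ocsguy.local |
    Select-Object -ExpandProperty BackupModules
```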

Once all the users are moved back we can leave the CMS on FE2 as there is no real reason to move it back. I have sent an email off to the Lync documentation team asking for more information on this and hope to have some more detail soon.

Summary:

I want to say a little about the process behind all of this before I wrap this part of the article up.

First of all, you can only pair like pools, meaning Standard Edition can be paired with Standard Edition, and Enterprise Edition can be paired with Enterprise Edition. However you cannot pair a Standard Edition pool with an Enterprise Edition Pool. I’m not sure if Topology Builder will stop you from doing this, but I do know the product group didn’t test it so it isn’t supported.

Second, pairing is now reciprocal, meaning a pool can be paired only with one other pool, and that pairing is two-way. In our case this means FE1 is paired only with FE2, and vice versa. They cannot pair with any other pools.
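You can see that relationship from the Lync Server Management Shell as well. A minimal check, using the pool names from this lab:

```powershell
# Returns the pool that fe1.ocsguy.local is paired with
Get-CsPoolBackupRelationship -PoolFqdn fe1.ocsguy.local
```

Running the same command against fe2.ocsguy.local should point back at FE1, since the pairing is two-way.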

Third, there is a new service installed once we pair pools, named “Lync Backup Service”, that replicates persistent data from one pool to another. This is what allows things like the contact list, meeting information, and call forwarding settings to be restored to the backup pool in the event of a primary pool loss. If the pool hosting the CMS is being paired, then the CMS will also be replicated to the reciprocal server. This means you can fail over the CMS as well in the event of a pool or datacenter loss.

Since I’ve started talking about disaster recovery, I might as well mention Recovery Time Objective (RTO) and Recovery Point Objective (RPO). An RTO of 15 minutes means that all services will be restored within 15 minutes of a disaster being declared and recovery work starting. An RPO of 15 minutes means the data that will be restored is no more than 15 minutes old.

Lync Server 2013 has a target RTO and RPO of 15 minutes for a pool with 40,000 concurrent users (this would be Enterprise Edition). This means we should expect to have a similar RTO and RPO for a Standard Edition deployment. Keep in mind the clock on the RTO doesn’t start until a disaster is declared and administrators can start fixing the problem.
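To make that last point concrete, here is a small worked example. The function name and the minute values are invented for illustration; the idea is simply that the outage users experience is the time taken to declare the disaster plus the recovery time, while only the recovery time counts against the RTO.

```powershell
# Hypothetical helper: compare what users experience with what the RTO measures
function Get-OutageEstimate {
    param(
        [Parameter(Mandatory = $true)][int]$MinutesToDeclare,  # outage start until disaster is declared
        [Parameter(Mandatory = $true)][int]$RecoveryMinutes,   # declaration until services are restored
        [int]$RtoMinutes = 15                                  # target RTO from the text above
    )

    [pscustomobject]@{
        TotalOutageMinutes = $MinutesToDeclare + $RecoveryMinutes
        WithinRto          = ($RecoveryMinutes -le $RtoMinutes)
    }
}

# 20 minutes to declare, 12 minutes to recover
Get-OutageEstimate -MinutesToDeclare 20 -RecoveryMinutes 12
```

In this example the RTO is met (12 minutes of recovery work), yet users were without full service for 32 minutes, which is why a fast decision process matters as much as a fast failover.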

That’s it for part 2, stay tuned for part 3 this coming week.

Ocsguy is THE guy. 😉

Nice Post Kevin. You mentioned that you needed to update the Lync simple URLs after doing a failover but what happens when all is running normally in an Active/Active scenario with respect to meetings and dialin urls – would you not want to use unique simple urls for each pool so that external users connect to the appropriate pool for meetings and dialin settings? Or can you use the same simple URLs for both Lync Servers and let Lync redirect the user to the appropriate pool where the meeting/user is homed (assuming that is actually what happens or is supported)

Same question for the Lync External Web Services url. Technet says the FQDN should be unique for each Pool but it appears the topology builder accepts the same value for both pools and allows you to successfully publish. It would be much easier to configure a common external web services url, for example, LyncWebExt.contoso.com, for a paired set of Pools but I have not tested to see if this actually works in allowing users to download the appropriate address book files and meeting content if they are directed to the wrong pool. Perhaps they are automatically directed back to the appropriate running pool in this case. Technet makes reference to using GeoDNS to handle DR scenarios where simple urls and web services are concerned – http://technet.microsoft.com/en-us/library/gg398758.aspx

Curious on your thoughts on this.

Best regards,

Dino

Hi Dino,

The simple URLs are not pool specific so they can be moved to any director or FE in the topology at any time (with just a DNS change). When someone joins a meeting they go to the meet page, which actually redirects them to the External Web Services FQDN of the pool hosting the meeting (after a lookup on the backend). With that being said, if you didn’t have unique web services FQDNs for each pool you would break meetings on at least 1 of the pools if not both.

Hope that helps clear it up!

Kevin

Thanks. Something to consider is if pools were in close geographic proximity you could use the same simple urls for both pools and leverage DNS round robin. In the event of a pool failure you would just remove the record for the failed pool. If they were far apart, say North America and Europe, you might want to use unique simple urls to direct the user to the right reverse proxy in the first place (if you deployed one in each site). However you would still need to update DNS in the event of a site failover. Technet talks about using GeoDNS to deal with this if available.

Hey Dino,

All good things to think about, but from what I’ve done in real-world global deployments, we tend not to use DNS round robin and instead move to a scenario where the A records are more strictly controlled. Then you can do different simple URLs that are location specific if you have multiple domains, but if the sign-in address/SIP domain is just one name we tend to just do the single set of simple URLs. In most cases setting the TTL on the DNS very low (60 minutes) gets us in far under the RTO and provides a more controlled and predictable scenario.

HTH

-kp

Yes indeed. It’s great being able to discuss and vet more advanced deployment considerations.

Thanks again,

D